Visual SLAM Mapping

A project to help develop the mapping systems of an autonomous racing car.

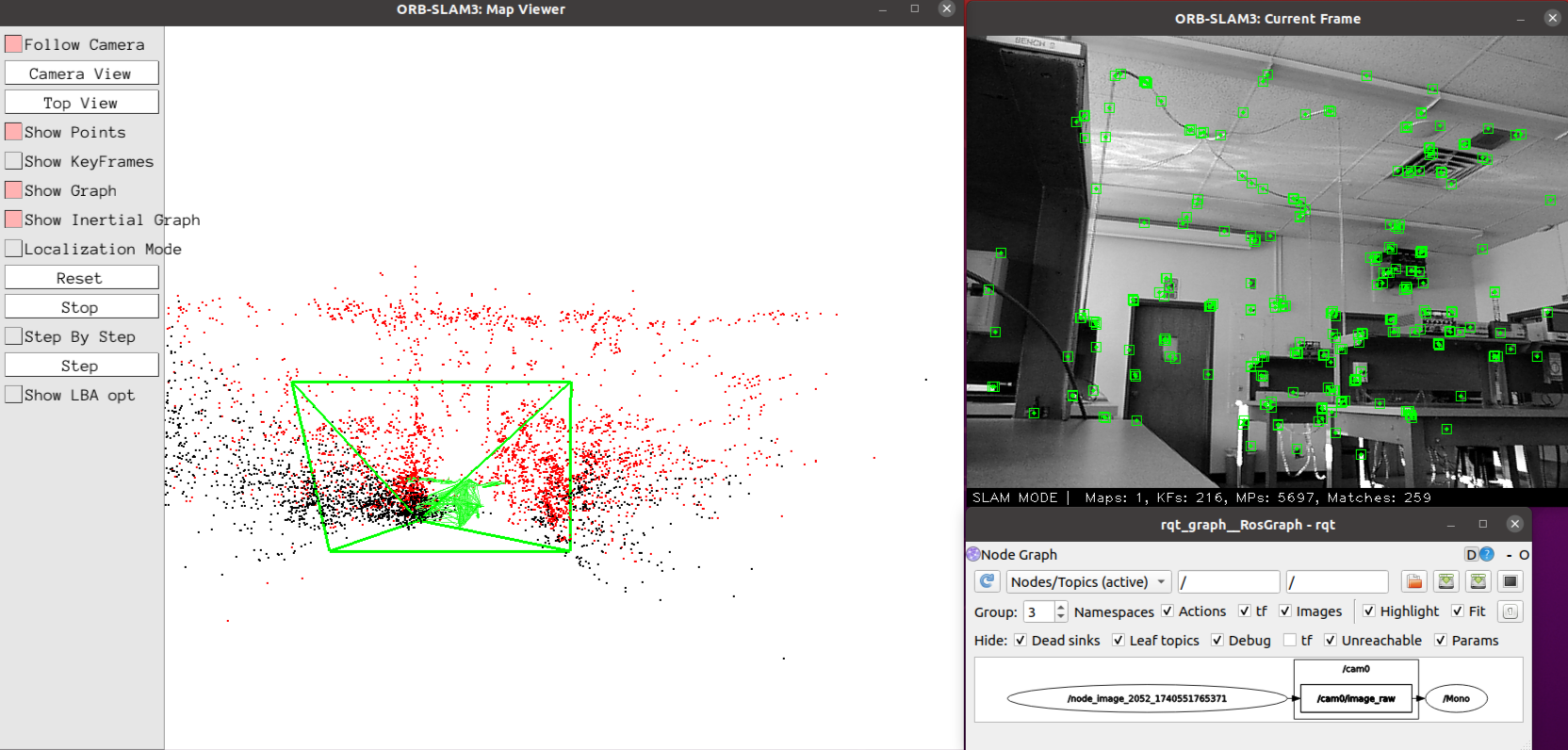

This project researches mapping solutions for RoboRacer (formerly known as F1TENTH). It focuses on visual SLAM (Simultaneous Localization and Mapping), which uses a camera feed to track key features and build a local map for autonomous navigation. It primarily uses ORB-SLAM3's visual SLAM algorithm, with the Robot Operating System (ROS) providing standardized data transfer.

My contributions to this project involve getting ORB-SLAM3 to run on a Raspberry Pi 4 under Ubuntu 20.04 and obtaining the resulting point cloud. The video feed is captured with a PlayStation Eye. Further research is needed on turning this point cloud into a more refined, outlined map, and on determining which factors affect performance the most (camera model, computer, etc.).
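As a rough illustration of what "turning the point cloud into an outlined map" could look like, here is a minimal sketch that flattens 3D points into a 2D occupancy grid. This is not the project's method, only one possible starting point. The function name `point_cloud_to_grid` and its parameters (`cell_size`, `min_hits`, `z_range`) are hypothetical, and the sketch assumes the points have already been transformed into a frame where z points up and units are meters, which a monocular ORB-SLAM3 map does not provide on its own.

```python
import math
from collections import Counter


def point_cloud_to_grid(points, cell_size=0.1, min_hits=2, z_range=(0.0, 1.0)):
    """Project 3D points onto the ground plane and return occupied cells.

    points    : iterable of (x, y, z) tuples (assumed: z up, metric units)
    cell_size : side length of one grid cell
    min_hits  : minimum number of points in a cell to call it occupied,
                which filters out isolated noisy map points
    z_range   : (low, high) height band to keep, e.g. to ignore the ceiling
    """
    z_low, z_high = z_range
    hits = Counter()
    for x, y, z in points:
        if z_low <= z <= z_high:
            # Integer cell index of the point on the ground plane.
            cell = (math.floor(x / cell_size), math.floor(y / cell_size))
            hits[cell] += 1
    return {cell for cell, count in hits.items() if count >= min_hits}


# Hypothetical sample data: two small clusters, one stray point,
# and one point above the height band.
sample_points = [
    (0.1, 0.1, 0.2), (0.2, 0.3, 0.5),    # cluster in cell (0, 0)
    (-0.2, 0.1, 0.1), (-0.3, 0.2, 0.1),  # cluster in cell (-1, 0)
    (1.1, 0.1, 0.3),                     # single hit, dropped by min_hits
    (0.2, 0.2, 5.0),                     # outside z_range, ignored
]
occupied = point_cloud_to_grid(sample_points, cell_size=0.5)
```

The `min_hits` threshold trades completeness for noise rejection: a higher value gives cleaner wall outlines but may drop sparsely observed obstacles.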

Parts List and Software

- Raspberry Pi 4 (Ubuntu 20.04)

- PlayStation Eye

- ORB-SLAM3

- USB Keyboard

- USB Mouse